
Haptics, the computer interface technology that mediates the sense of touch, is a rapidly growing field with applications ranging from telerobotics, entertainment, and education to realistic simulators for training and planning complex surgical procedures. The Centre for Image Analysis (CBA) has conducted haptics research for more than a decade. This research and method development has resulted in systems (software and hardware) for haptics-assisted assembly of fractured objects, interactive segmentation of medical images, and cranio-maxillofacial (CMF) surgery planning.

We are developing a system for planning the restoration of skeletal anatomy in facial trauma patients, using a virtual model derived from patient-specific CT data. The system combines stereo visualization with six-degrees-of-freedom, high-fidelity haptic feedback that enables analysis, planning, and preoperative testing of alternative solutions for restoring bone fragments to their proper positions.

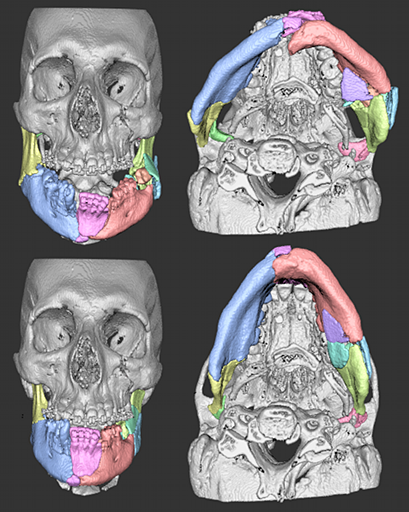

We have developed BoneSplit, an efficient interactive tool for segmenting individual bones and bone fragments in 3D computed tomography (CT) images. BoneSplit combines direct volume rendering with interactive 3D texture painting, enabling quick identification and marking of structures such as bones. The user can paint markers (seeds) directly on the rendered bone surfaces as well as on individual CT slices. The marked bones are then separated by the random walks algorithm, which is applied to a graph constructed from the thresholded bones.
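
The random walks step can be illustrated on a small 2D image. The sketch below is a minimal pure-Python illustration, not BoneSplit's implementation. It assumes a 4-connected pixel graph (a 3D volume would use 6-connected voxels), Gaussian intensity edge weights w = exp(-beta * (I_i - I_j)^2) as in Grady's original random walker formulation, and a hypothetical function name `random_walker_2d`. For readability it solves the underlying Dirichlet problem with Gauss-Seidel iterations; a practical implementation would solve the sparse graph Laplacian system directly.

```python
import math


def random_walker_2d(image, threshold, seeds, beta=10.0, iters=2000, tol=1e-6):
    """Label thresholded pixels by random-walker probabilities (illustrative sketch).

    image: list of rows of floats; a pixel is "bone" if value >= threshold.
    seeds: dict mapping (row, col) -> integer label, painted by the user.
    Returns a dict (row, col) -> label for every bone pixel.
    """
    rows, cols = len(image), len(image[0])
    bone = {(r, c) for r in range(rows) for c in range(cols)
            if image[r][c] >= threshold}

    # Graph over bone pixels only: 4-connected edges, Gaussian intensity weights.
    nbrs = {p: [] for p in bone}
    for (r, c) in bone:
        for dr, dc in ((1, 0), (0, 1)):  # each edge is visited once
            q = (r + dr, c + dc)
            if q in bone:
                diff = image[r][c] - image[q[0]][q[1]]
                w = math.exp(-beta * diff * diff)
                nbrs[(r, c)].append((q, w))
                nbrs[q].append(((r, c), w))

    labels = sorted(set(seeds.values()))
    free = [p for p in bone if p not in seeds]
    probs = {}
    for lab in labels:
        # Boundary conditions: 1 at seeds of this label, 0 at all other seeds.
        x = {p: (1.0 if seeds.get(p) == lab else 0.0) for p in bone}
        for _ in range(iters):
            delta = 0.0
            for p in free:
                deg = sum(w for _, w in nbrs[p])
                if deg == 0.0:  # isolated pixel: no walk possible
                    continue
                # Harmonic condition: value = weighted mean of neighbours.
                new = sum(w * x[q] for q, w in nbrs[p]) / deg
                delta = max(delta, abs(new - x[p]))
                x[p] = new
            if delta < tol:
                break
        probs[lab] = x

    # Each pixel takes the label a walker starting there most likely reaches first.
    return {p: max(labels, key=lambda lab: probs[lab][p]) for p in bone}
```

Pixels in a thresholded component without any seeds get all-zero probabilities and fall back to the first label, so each fragment to be separated should carry at least one seed.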

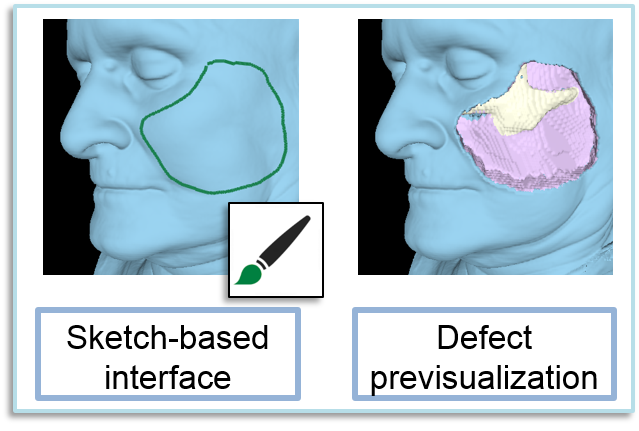

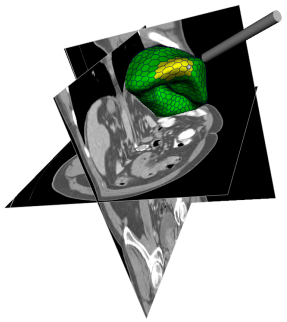

Resection of soft tissue is commonly performed in head and neck cancer surgery. Removing the tumour creates a defect in the face that consists of different tissue layers (e.g., skin, fat, muscle, or bone). Reconstructing this defect usually requires transplanting vascularised tissue from another part of the body, typically the thigh. We have developed surgical planning software that can help surgeons estimate the size and shape of a soft-tissue resection based on patient-specific computed tomography (CT) data.

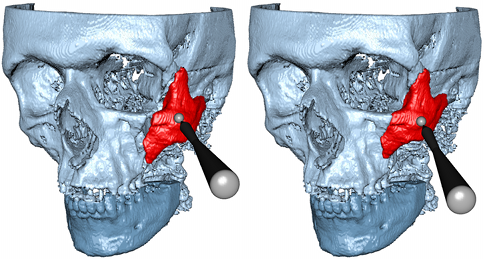

Precise fitting of fractured objects, guided by delicate haptic cues similar to those present in the physical world, requires haptic display transparency beyond the capabilities of today's systems. We have developed a haptic alignment tool that combines a six-degrees-of-freedom (DOF) attraction force with traditional six-DOF contact forces to pull a virtual object towards a local stable fit with a fixed object.
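
One common way to realize such an attraction force is a 6-DOF spring-damper coupling toward a target pose. The sketch below assumes that the target pose (the local stable fit) has already been found by other means, for example a local registration step, and only shows how an attraction wrench (force plus torque) could be computed and summed with a contact wrench. The gains, the function names, and the (w, x, y, z) quaternion convention are illustrative assumptions, not details of the tool described above.

```python
import math


def quat_mul(a, b):
    """Hamilton product of quaternions given as (w, x, y, z)."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (aw * bw - ax * bx - ay * by - az * bz,
            aw * bx + ax * bw + ay * bz - az * by,
            aw * by - ax * bz + ay * bw + az * bx,
            aw * bz + ax * by - ay * bx + az * bw)


def quat_conj(q):
    """Conjugate, equal to the inverse for a unit quaternion."""
    w, x, y, z = q
    return (w, -x, -y, -z)


def rotation_vector(q):
    """Axis * angle of a unit quaternion, using the shortest rotation."""
    w, x, y, z = q
    if w < 0.0:  # q and -q encode the same rotation; take the short way round
        w, x, y, z = -w, -x, -y, -z
    s = math.sqrt(x * x + y * y + z * z)
    if s < 1e-12:
        return (0.0, 0.0, 0.0)
    angle = 2.0 * math.atan2(s, w)
    return (x / s * angle, y / s * angle, z / s * angle)


def attraction_wrench(pos, rot, vel, omega, target_pos, target_rot,
                      k_t=200.0, b_t=5.0, k_r=2.0, b_r=0.05):
    """Hypothetical spring-damper pulling a pose toward a target pose (world frame)."""
    # Translational spring toward the target position, damped by linear velocity.
    force = tuple(k_t * (tp - p) - b_t * v
                  for tp, p, v in zip(target_pos, pos, vel))
    # Orientation error rotating the current orientation onto the target one.
    err = rotation_vector(quat_mul(target_rot, quat_conj(rot)))
    # Rotational spring, damped by world-frame angular velocity.
    torque = tuple(k_r * e - b_r * w for e, w in zip(err, omega))
    return force, torque


def total_wrench(attraction, contact):
    """Sum attraction and contact wrenches, each given as (force, torque)."""
    (fa, ta), (fc, tc) = attraction, contact
    return (tuple(a + c for a, c in zip(fa, fc)),
            tuple(a + c for a, c in zip(ta, tc)))
```

Adding the two wrenches lets the contact term keep the objects from interpenetrating while the attraction term guides the user toward the fit; in a real haptic loop the gains would have to be tuned to the device's stiffness and stability limits.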

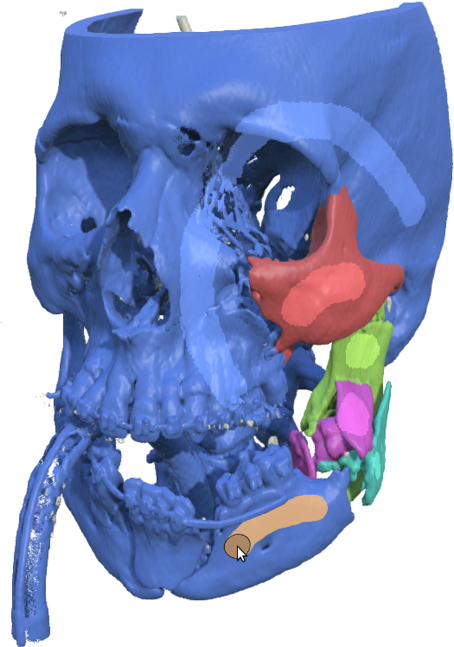

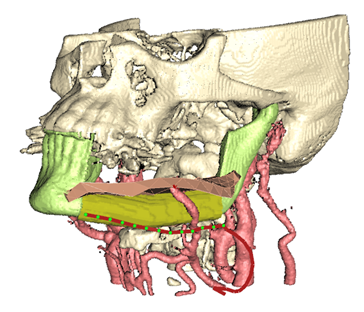

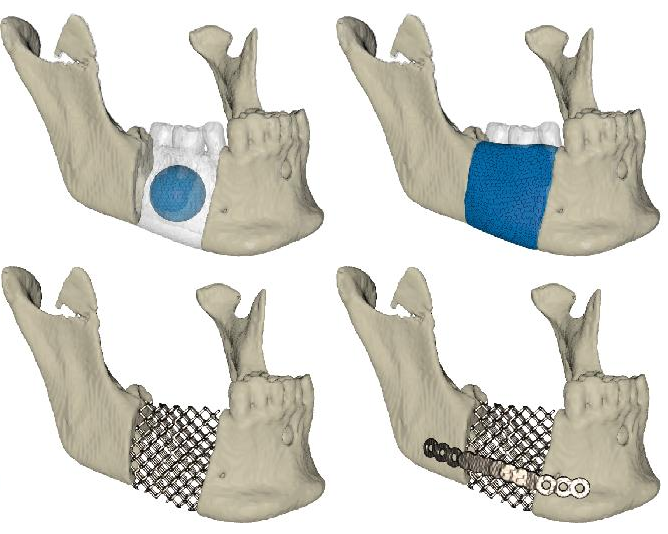

HASP is based on fast interactive segmentation. We demonstrate a planning session in HASP for a mandibular (lower jaw) reconstruction using bone segments grafted from the patient's fibula. A surgeon can interactively and iteratively design an optimal reconstruction by defining and refining fibula osteotomies, anastomosis sites, and the configuration of a skin paddle to cover soft-tissue deficits.

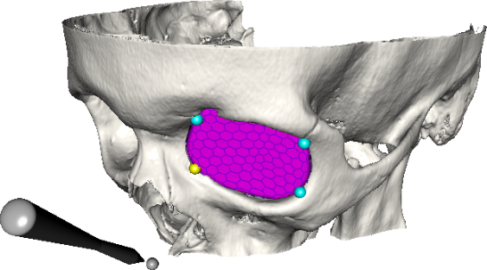

One important component of surgery planning is accurately measuring the extent of certain anatomical structures, such as the shape and volume of the orbits (eye sockets). These properties can be measured in 3D computed tomography (CT) images of the skull, which requires segmenting the orbits from the rest of the image. In this project, we are developing a semi-automatic system for segmenting the orbits.
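
Once an orbit has been segmented, its volume follows from the binary mask by voxel counting: volume = number of mask voxels × volume of one voxel. The sketch below assumes the mask is a nested [z][y][x] list of 0/1 values and that the voxel spacing is known in millimetres; the helper names `mask_volume_ml` and `mask_extent_mm` are hypothetical. It ignores partial-volume effects at the orbit boundary, which a more careful estimator would account for.

```python
def mask_volume_ml(mask, spacing_mm):
    """Volume of a binary [z][y][x] mask in millilitres (1 mL = 1000 mm^3)."""
    dz, dy, dx = spacing_mm
    count = sum(1 for plane in mask for row in plane for v in row if v)
    return count * dz * dy * dx / 1000.0


def mask_extent_mm(mask, spacing_mm):
    """Axis-aligned bounding-box size (z, y, x) of the mask in millimetres."""
    coords = [(z, y, x)
              for z, plane in enumerate(mask)
              for y, row in enumerate(plane)
              for x, v in enumerate(row) if v]
    if not coords:
        return (0.0, 0.0, 0.0)
    extent = []
    for axis, spacing in enumerate(spacing_mm):
        values = [c[axis] for c in coords]
        # +1 because the first and last voxels each span a full voxel width.
        extent.append((max(values) - min(values) + 1) * spacing)
    return tuple(extent)
```

For example, a 10 × 20 × 30 voxel mask with 0.5 × 0.4 × 0.4 mm voxels contains 6000 voxels of 0.08 mm³ each, giving 480 mm³, or about 0.48 mL.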

The WISH toolkit was the first project of the haptics era at CBA. WISH comprises algorithms and methods for interactive medical image analysis using volume visualization and haptics. The toolkit is licensed under the GNU General Public License (GPL). The core of WISH is a stand-alone C++ library with implementations of image analysis, visualization, and haptic rendering algorithms, integrated with the H3D API from SenseGraphics AB.

We propose a semi-automatic method based on deformable models and haptics that allows a surgeon to easily design, adjust, and virtually test the fit of a scaffold implant before production.

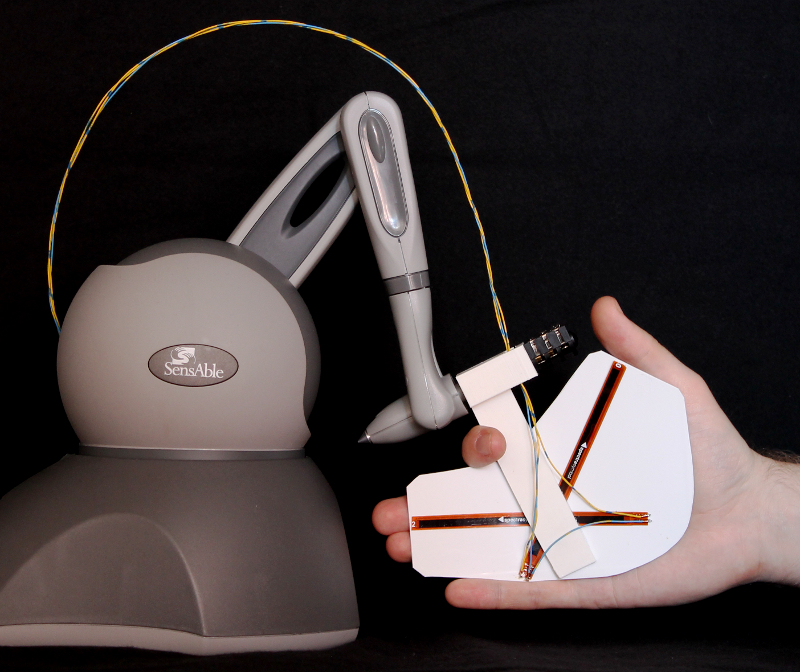

SplineGrip is a flexible haptic sculpting tool that senses the articulation of the hand in two degrees-of-freedom (DOF). The tool is mounted on a commercial haptic device that tracks hand pose (position and orientation in six DOF) while simultaneously providing three DOF haptic feedback to the hand.

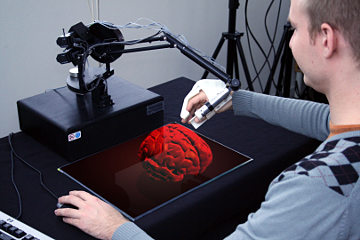

Co-located haptics will gain importance when more advanced haptic interfaces, such as high-fidelity whole-hand devices, become available. We have investigated the pros and cons of physically co-located versus non-co-located haptics on stereoscopic displays.

Our long-term goal is a new interaction paradigm that gives the user an unprecedented experience of touching and manipulating high-contrast, high-resolution, three-dimensional (3D) virtual objects suspended in space. The user wears a glove whose whole-hand haptic feedback is so realistic that the interaction closely resembles handling real objects with a bare hand.

We have conducted studies on how to generate tactile stimulation to the fingertip.