
Haptics and its Applications to Medicine

Ingrid Carlbom, Pontus Olsson, Fredrik Nysjö

Partners: Stefan Johansson (Division of Microsystems Technology, UU and Teknovest AB); Jan-Michaél Hirsch, Dept. of Surgical Sciences, Oral & Maxillofacial Surgery, UU and Consultant at Dept. of Plastic- and Maxillofacial Surgery, UU Hospital; Andreas Thor, Dept. of Surgical Sciences, Oral & Maxillofacial Surgery, UU Hospital; Andres Rodriguez Lorenzo, Dept. of Surgical Sciences, Plastic Surgery, UU Hospital; PiezoMotors AB.

Funding: Dept. of Surgical Sciences, Oral & Maxillofacial Surgery, UU Hospital; Thuréus Stiftelsen

Period: 1301-

Abstract: This year we augmented the Uppsala Haptics-Assisted Surgery Planning (HASP) system with virtual reconstruction of head and neck defects by fibula osteocutaneous free flaps (FOFF), including the bone, vessels, and soft tissue of the FOFF in the defect reconstruction. With the HASP stereo graphics and haptic feedback, and using patient-specific CT angiography data, surgeons can plan bone resection, fibula design, recipient vessel selection, pedicle and perforator location, and skin-paddle configuration.

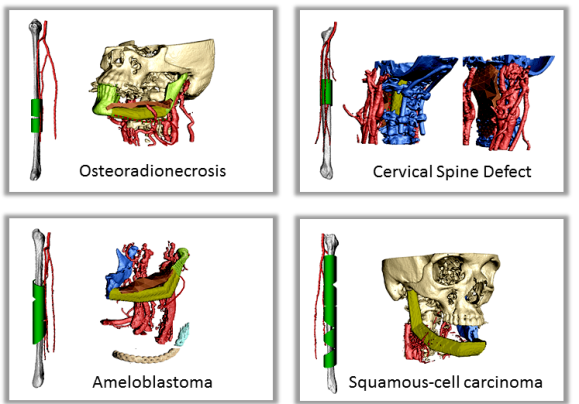

Two surgeons tested HASP on four cases they had previously operated on: three with composite mandibular defects and one with a composite cervical spine defect (Figure 10). During the planning sessions, it became apparent that some problems encountered during the actual surgery could have been avoided. In one case, the fibula reconstruction was incomplete since the fibula had to be reversed and thus did not reach the temporal fossa. In another case, the fibula had to be rotated 180 degrees to correct the plate and screw placement in relation to the perforator. In the spinal case, difficulty in finding the optimal fibula shape and position required extra ischemia time. The surgeons found HASP to be an efficient planning tool for FOFF reconstructions. Testing alternative reconstructions preoperatively to arrive at an optimal FOFF solution can potentially improve patient healing, function, and aesthetics, and reduce operating room time.


ProViz - Interactive Visualization of 3D Protein Images

Lennart Svensson, Ida-Maria Sintorn, Ingela Nyström, Fredrik Nysjö, Johan Nysjö, Anders Brun, Gunilla Borgefors

Partners: Dept. of Cell and Molecular Biology, Karolinska Institute; SenseGraphics AB

Funding: The Visualization Program by Knowledge Foundation; Vaardal Foundation; Foundation for Strategic Research; VINNOVA; Invest in Sweden Agency; SLU, faculty funding

Period: 0807-1412

Abstract: Electron tomography is the only microscopy technique that allows 3-D imaging of biological samples at nanometer resolution. It thus enables studies of both the dynamics of proteins and individual macromolecular structures in tissue. However, electron tomography images have a low signal-to-noise ratio, which makes image analysis methods an important tool for interpreting them. The ProViz project aims to develop visualization and analysis methods in this area.

In 2014, the project focused on finalizing and testing the methods and software, and on preparing the manuscript that describes and demonstrates the ProViz software. Lennart Svensson defended his PhD thesis, which is closely linked to this project.

Analysis and Processing of Three-Dimensional Magnetic Resonance Images on Optimal Lattices

Elisabeth Linnér, Robin Strand

Funding: TN-faculty, UU

Period: 1005-

Abstract: Three-dimensional images are widely used in, for example, health care. With optimal sampling lattices, the amount of data can be reduced by 20-30% without affecting the image quality, lowering the demands on the hardware used to store and process the images, and reducing processing time.
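The size of this reduction can be motivated with a standard sphere-packing argument. The sketch below is an illustration and not part of the project software. It assumes an isotropically band-limited signal, so that the required sample density is inversely proportional to the sphere-packing density of the reciprocal lattice on which the spectrum is replicated. The function and table names are hypothetical.

```python
import math

# Sphere-packing densities of the three lattices (standard textbook values).
PACKING_DENSITY = {
    "cartesian": math.pi / 6,
    "bcc": math.pi * math.sqrt(3) / 8,
    "fcc": math.pi / (3 * math.sqrt(2)),
}

# Sampling on a lattice replicates the spectrum on its reciprocal lattice:
# BCC and FCC are reciprocal to each other, the Cartesian grid to itself.
RECIPROCAL = {"cartesian": "cartesian", "bcc": "fcc", "fcc": "bcc"}


def sample_saving(sampling_lattice):
    """Fraction of samples saved relative to a Cartesian grid.

    Assumes a spherically band-limited spectrum, so the sample density
    scales as 1 / (packing density of the reciprocal lattice).
    """
    reciprocal = RECIPROCAL[sampling_lattice]
    ratio = PACKING_DENSITY["cartesian"] / PACKING_DENSITY[reciprocal]
    return 1.0 - ratio


if __name__ == "__main__":
    for name in ("bcc", "fcc"):
        print(f"{name.upper()} sampling saves about {sample_saving(name):.1%}")
```

Under these assumptions, BCC sampling saves roughly 29% of the samples and FCC sampling roughly 23%, which is consistent with the 20-30% range quoted above.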

In this project, methods for image acquisition, analysis, and visualization using optimal sampling lattices are studied and developed, with a special focus on medical applications. The intention is that the project will lead to faster and better image processing with lower demands on data storage capacity. One goal of the project is to release open-source software for producing, processing, analyzing, and visualizing volume images sampled on BCC and FCC lattices, so that potential users can readily explore such images on their own. During 2014, the focus was on distance transforms. Two reviewed conference papers exploring a graph-based implementation of the anti-aliased Euclidean distance transform were published, and work on the open-source software is progressing.

Registration of Medical Volume Images

Robin Strand, Filip Malmberg

Partners: Joel Kullberg, Håkan Ahlström, Dept. of Radiology, Oncology and Radiation Science, UU

Funding: Faculty of Medicine, UU

Period: 1208-

Abstract: In this project, we mainly process magnetic resonance tomography (MR) images. MR images are very useful in clinical practice and in medical research, e.g., for analyzing the composition of the human body. At the division of Radiology, UU, a huge amount of MR data, including whole-body MR images, is acquired for research on the connection between the composition of the human body and disease.

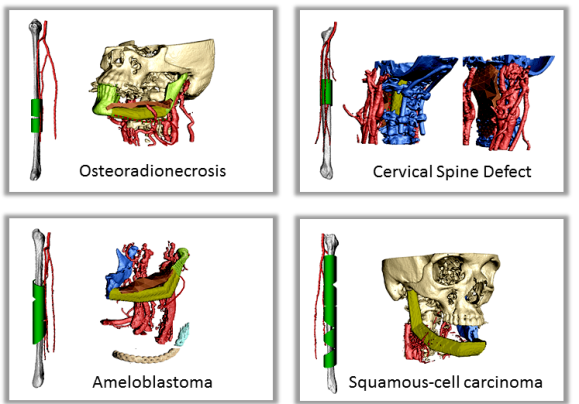

To compare volume images voxel by voxel, we develop image registration methods. Large-scale analysis is enabled by image registration methods that utilize, for example, segmented tissue (e.g., Project 22) and anatomical landmarks. Based on this idea, we have developed Imiomics (imaging omics), an image analysis concept that includes image registration and allows statistical and holistic analysis of whole-body image data (Figure 11). The Imiomics concept is holistic in three respects: (i) the whole body is analyzed, (ii) all collected image data is used in the analysis, and (iii) it allows integration of all other collected non-imaging patient information in the analysis.


At CBA, we have been developing powerful new methods for interactive image segmentation. In this project, we seek to employ these methods for segmentation of medical images, in collaboration with the Dept. of Radiology, Oncology and Radiation Science (ROS) at the UU Hospital.

During 2014, we published an article describing SmartPaint, a tool for interactive segmentation of volume images. The SmartPaint software is publicly available and can be downloaded from http://www.cb.uu.se/~filip/SmartPaint/. To date, the software has been downloaded more than 500 times.

Orbit Segmentation for Cranio-Maxillofacial Surgery Planning

Johan Nysjö, Ida-Maria Sintorn, Ingela Nyström, Filip Malmberg

Partners: Jan Michael Hirsch, Andreas Thor, Johanna Nilsson, Dept. of Surgical Sciences, UU Hospital; Roman Khonsari, Pitie Salpetriere Hospital, Paris, France; Jonathan Britto, Great Ormond Street Hospital, London, UK

Funding: TN-faculty, UU

Period: 0912-

Abstract: An important component in cranio-maxillofacial (CMF) surgery planning is to be able to accurately measure the extent of certain anatomical structures. The shape and volume of the orbits (eye sockets) are of particular interest. These properties can be measured in CT images of the skull, but this requires accurate segmentation of the orbits. Today, segmentation is usually performed by manual tracing of the orbit in a large number of slices of the CT image. This task is very time-consuming, and sensitive to operator errors. Semi-automatic segmentation methods could reduce the required operator time substantially. In this project, we are developing a prototype of a semi-automatic system for segmenting the orbit in CT images. The segmentation system is based on WISH, a software package for interactive visualization and segmentation that has been developed at CBA since 2003. WISH has been released under an open-source license and is available for download at http://www.cb.uu.se/research/haptics.

Our main focus during 2014 has been to continue our collaboration with surgeons from the Craniofacial Centre at Great Ormond Street Hospital, London, UK, in a project that aims to analyse the size and shape of the orbits in a large set of pre- and post-operative CT images of patients with congenital disorders. Our semi-automatic segmentation system has been used to rapidly segment the orbits in these datasets, and we have extended the system with automatic registration-based techniques for performing size and shape analysis of the segmented orbits. Abstracts about the ongoing work have been presented at medical conferences. We completed the size and shape analysis experiments for the study during the autumn and have now started to summarize the results in manuscripts.

Precise 3D Angle Measurements in CT Wrist Images

Johan Nysjö, Filip Malmberg, Ingela Nyström, Ida-Maria Sintorn

Partners: Albert Christersson, Sune Larsson, Dept. of Orthopedics, UU Hospital

Funding: TN-faculty, UU

Period: 1111-

Abstract: To decide on the correct treatment of a fracture, orthopedic surgeons need to assess the details of the fracture. One of the most important factors is the fracture displacement, particularly the angulation of the fracture. The wrist is the most common fracture location in humans. When a fracture is located close to a joint, as in the wrist, the angulation of the joint line in relation to the long axis of the long bone needs to be measured. Since the surface of the joint line in the wrist is highly irregular, and since it is difficult to take X-rays of the wrist in exactly the same projection on each occasion, conventional X-ray is not an optimal method for this purpose. In most clinical cases, conventional 2D angle measurements in X-ray images are satisfactory for making correct treatment decisions, but when comparing two different methods of treatment, for instance two different operation techniques, the accuracy and precision of the angle measurements need to be higher.

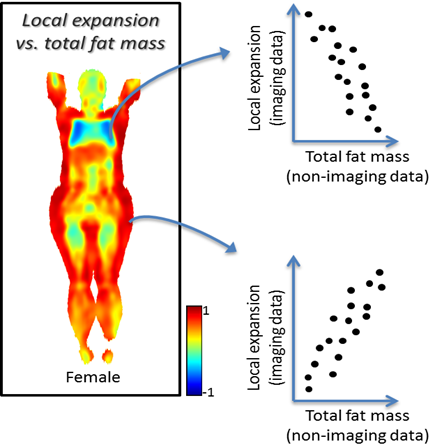

In this project, we are developing a system for performing precise 3D angle measurements in computed tomography (CT) images of the wrist. Our proposed system is semi-automatic; the user is required to guide the system by indicating the approximate position and orientation of various parts of the radius bone. This information is subsequently used as input to an automatic algorithm that makes precise angle measurements. We have developed a RANSAC-based method for estimating the long axis of the radius bone and a registration-based method (Figure 12) for measuring the orientation of the joint surface of the radius. Preliminary evaluations have shown that these two methods together enable relative measurements of the dorsal angle in the wrist with sub-millimeter precision. During 2014, we performed a more extensive case study (involving 40 CT scan sequences of fractured wrists) to further evaluate the performance of our 3D angle measurement method and compare it with the conventional 2D X-ray measurement method. A manuscript about this study is in preparation.
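To illustrate the general idea behind RANSAC-based axis estimation, the following minimal sketch fits a 3D line to a point set that contains outliers. It is a generic textbook RANSAC and not the project's implementation: the function names, the distance threshold, and the iteration count are assumptions, and the usual final least-squares refit on the inliers is omitted for brevity.

```python
import math
import random


def _sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def _norm(a):
    return math.sqrt(a[0] ** 2 + a[1] ** 2 + a[2] ** 2)


def point_line_distance(p, origin, direction):
    """Distance from p to the line through origin with unit direction."""
    return _norm(_cross(_sub(p, origin), direction))


def ransac_line(points, threshold=1.0, iterations=200, seed=0):
    """Return ((origin, unit_direction), inliers) for the best-supported line.

    Each iteration draws two points, forms the line through them, and counts
    the points lying within `threshold` of it (hypothetical parameter values).
    """
    rng = random.Random(seed)
    best_model, best_inliers = None, []
    for _ in range(iterations):
        a, b = rng.sample(points, 2)
        d = _sub(b, a)
        length = _norm(d)
        if length == 0.0:
            continue  # coincident sample points define no line
        direction = (d[0] / length, d[1] / length, d[2] / length)
        inliers = [p for p in points
                   if point_line_distance(p, a, direction) <= threshold]
        if len(inliers) > len(best_inliers):
            best_model, best_inliers = (a, direction), inliers
    return best_model, best_inliers
```

In a setting like the one described above, `points` could be surface or voxel coordinates sampled from the shaft of the bone, with points near the irregular joint region acting as outliers. How the project selects these points is not specified here.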

Skeleton-Based Vascular Segmentation at Interactive Speed

Kristína Lidayová, Hans Frimmel, Ewert Bengtsson

Partners: Örjan Smedby, Chunliang Wang, Center for Medical Image Science and Visualization (CMIV), Linköping University

Funding: VR grant to Örjan Smedby

Period: 1207-

Abstract: Precise segmentation of vascular structures is crucial for studying the effect of stenoses on arterial blood flow. The goal of this project is to develop and evaluate a vascular segmentation method that is fast enough to permit interactive clinical use. The first part is the extraction of the centerline tree (skeleton) from the gray-scale CT image. This skeleton is later used as a seed region. The method should offer sub-voxel accuracy.

During 2013, we improved the software for fast vessel centerline tree extraction. The method was tested on several CT datasets and the results look promising. The main vessel centerlines are generally detected, but further improvement is needed to remove some false positive centerlines.

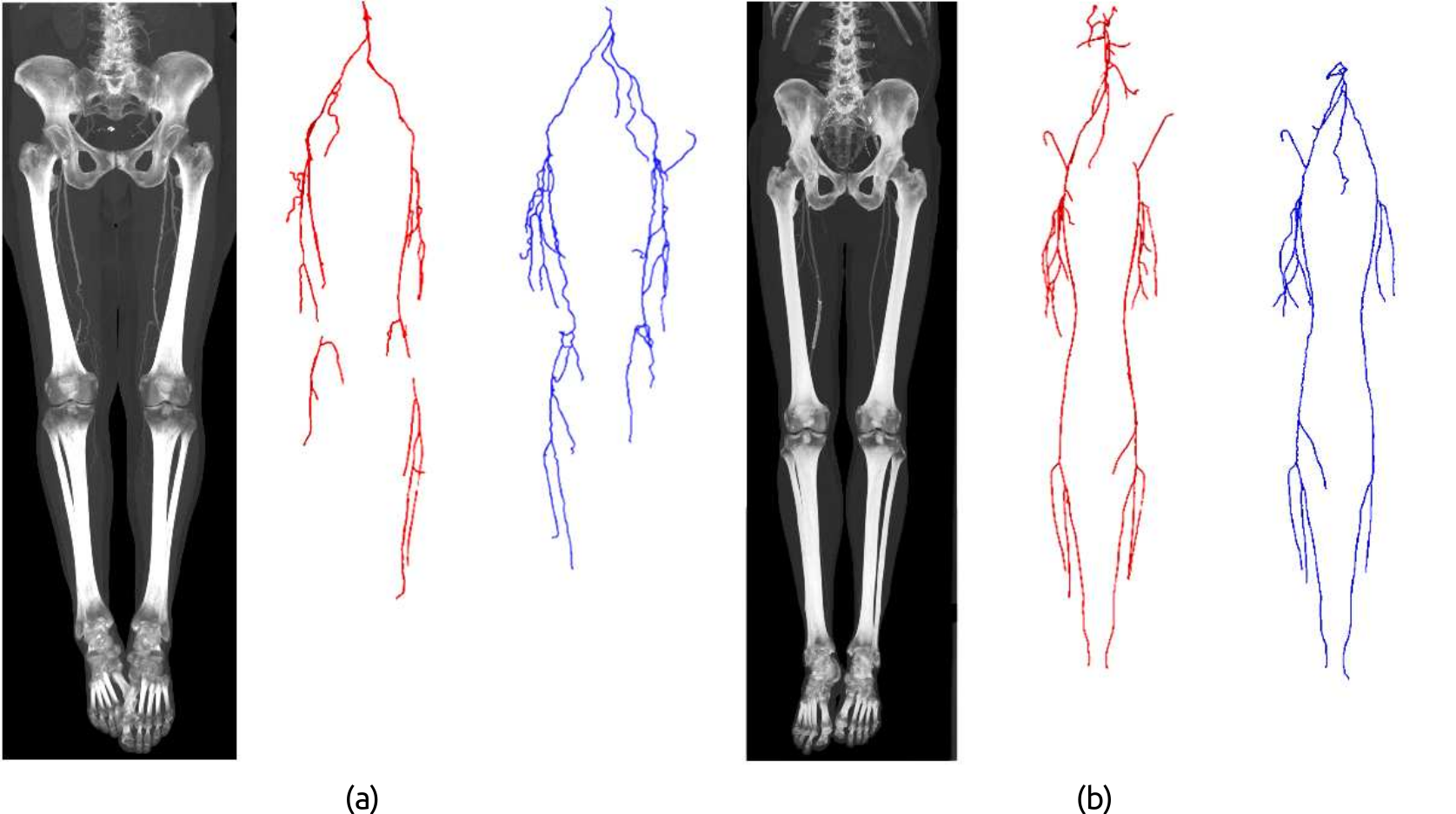

In 2014, we brought the software to its final stage. It takes the original computed tomography angiography (CTA) image as input and produces a node-link representation of the vascular structures of the lower limbs. The method (Figure 13) works in two passes: the first pass extracts the skeleton of the large arteries, and the second pass focuses on extracting the small arteries. Each pass contains three major steps: (1) set proper intensity ranges for the different anatomical structures based on Gaussian curve fitting to the image histogram; (2) apply different filters to detect voxels that are part of arteries, where the filters are designed based on intensity and size analysis of elliptical shapes on 2-D planes; (3) connect the nodes to obtain a centerline tree for the entire vasculature. The method has been tested on 25 CTA scans of the lower limbs (Figure 14) and achieved an average overlap rate of 96% with the ground truth. The average computation time is 121 s per scan.
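Step (1) can be illustrated with a small sketch that fits a single Gaussian to the tallest peak of a histogram and derives an intensity range from it. This is one simple way to perform such a fit, not the project's actual procedure: it uses the fact that the logarithm of a Gaussian is a parabola and fits that parabola by least squares. The function names, the window size, and the range width `k` are all assumptions.

```python
import math


def _det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def fit_gaussian_peak(centers, counts, half_window=5):
    """Fit a Gaussian to the tallest histogram peak; return (mean, sigma).

    Fits y = a*x^2 + b*x + c to the log counts in a window around the peak,
    with x measured relative to the peak bin for numerical stability.
    """
    peak = max(range(len(counts)), key=lambda i: counts[i])
    lo = max(0, peak - half_window)
    hi = min(len(counts), peak + half_window + 1)
    xs, ys = [], []
    for i in range(lo, hi):
        if counts[i] > 0:  # log is undefined for empty bins
            xs.append(centers[i] - centers[peak])
            ys.append(math.log(counts[i]))
    if len(xs) < 3:
        raise ValueError("need at least three non-empty bins")
    # Normal equations of the quadratic least-squares fit.
    s = [sum(x ** k for x in xs) for k in range(5)]
    t = [sum((x ** k) * y for x, y in zip(xs, ys)) for k in range(3)]
    m = [[s[4], s[3], s[2]], [s[3], s[2], s[1]], [s[2], s[1], s[0]]]
    rhs = [t[2], t[1], t[0]]
    det = _det3(m)

    def replaced(col):
        # Matrix with one column swapped for the right-hand side (Cramer's rule).
        return [[rhs[r] if c == col else m[r][c] for c in range(3)]
                for r in range(3)]

    a = _det3(replaced(0)) / det
    b = _det3(replaced(1)) / det
    if a >= 0:
        raise ValueError("window does not contain a peak")
    mean = centers[peak] - b / (2 * a)
    sigma = math.sqrt(-1 / (2 * a))
    return mean, sigma


def intensity_range(mean, sigma, k=2.0):
    """Intensity interval mean +/- k*sigma (k is a hypothetical choice)."""
    return mean - k * sigma, mean + k * sigma
```

A real CTA histogram contains several overlapping peaks for the different tissue types, so a practical version would fit one Gaussian per structure or a mixture; the sketch only handles the single tallest peak.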

A paper summarizing this work was written and submitted to a special issue of Pattern Recognition Letters on skeletonization and its applications. The paper is currently under major revision. The work was presented at the SSBA conference and at Medicinteknikdagarna.


Ubiquitous Visualization in the Built Environment

Stefan Seipel, Fei Liu

Partner: Dept. of Industrial Development, IT and Land Management, University of Gävle

Funding: University of Gävle; TN-faculty, UU

Period: 1108-

Abstract: This project deals with mobile visualization and augmented reality (AR) in indoor and outdoor environments. Several key problems for robust mobile visualization are addressed, such as spatial tracking and calibration, image-based 2D and 3D registration, and efficient graphical representations in mobile user interfaces.

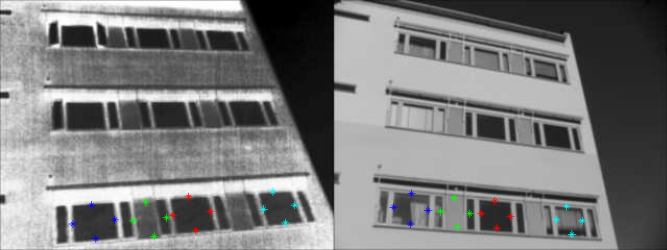

During 2014, two major lines of work were carried out. The first is the registration of thermal infrared and visible facade images for augmented reality-based building inspection. Here, the problem of multi-modal image registration is addressed by identifying high-level (quadrilateral) features that model the shapes of commonly present facade elements, such as windows (Figure 15). These features are generated by grouping edge line segments with the help of image perspective information, namely vanishing points. Our method adopts a forward selection algorithm for selecting the feature correspondences needed to estimate the transformation model (Figure 16). While the feature correspondence set is being formed, the correctness of the correspondences selected at each step is verified through the quality of the resulting registration, which is based on the ratio of areas between the transformed features and the reference features. Part of this work has been published in the Journal of Image and Graphics. Other results of this work are currently being prepared for publication.
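An area-ratio quality measure of this kind could look like the sketch below. The exact definition used in the project is not given here, so the formula is an assumption: a quadrilateral feature is mapped through a candidate 3x3 homography, its area is computed with the shoelace formula, and the score is the smaller area divided by the larger one. All function names are hypothetical.

```python
def polygon_area(vertices):
    """Area of a simple polygon (list of (x, y)) via the shoelace formula."""
    total = 0.0
    n = len(vertices)
    for i in range(n):
        x0, y0 = vertices[i]
        x1, y1 = vertices[(i + 1) % n]
        total += x0 * y1 - x1 * y0
    return abs(total) / 2.0


def apply_homography(h, point):
    """Map a 2D point through a 3x3 homography given as nested lists."""
    x, y = point
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    return ((h[0][0] * x + h[0][1] * y + h[0][2]) / w,
            (h[1][0] * x + h[1][1] * y + h[1][2]) / w)


def area_ratio_score(h, source_quad, reference_quad):
    """Score in [0, 1]; 1 means the transformed and reference areas agree.

    Assumed definition: min(area) / max(area) of the transformed source
    quadrilateral and the reference quadrilateral.
    """
    transformed = [apply_homography(h, p) for p in source_quad]
    area_t = polygon_area(transformed)
    area_r = polygon_area(reference_quad)
    if area_t == 0.0 or area_r == 0.0:
        return 0.0  # degenerate quadrilateral: treat as a failed match
    return min(area_t, area_r) / max(area_t, area_r)
```

In a forward selection loop, a candidate correspondence would be kept only if adding it to the set yields a transformation whose score over the matched features does not deteriorate. An area ratio alone cannot detect a feature that has the right size but the wrong position, so in practice it would be combined with other checks.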

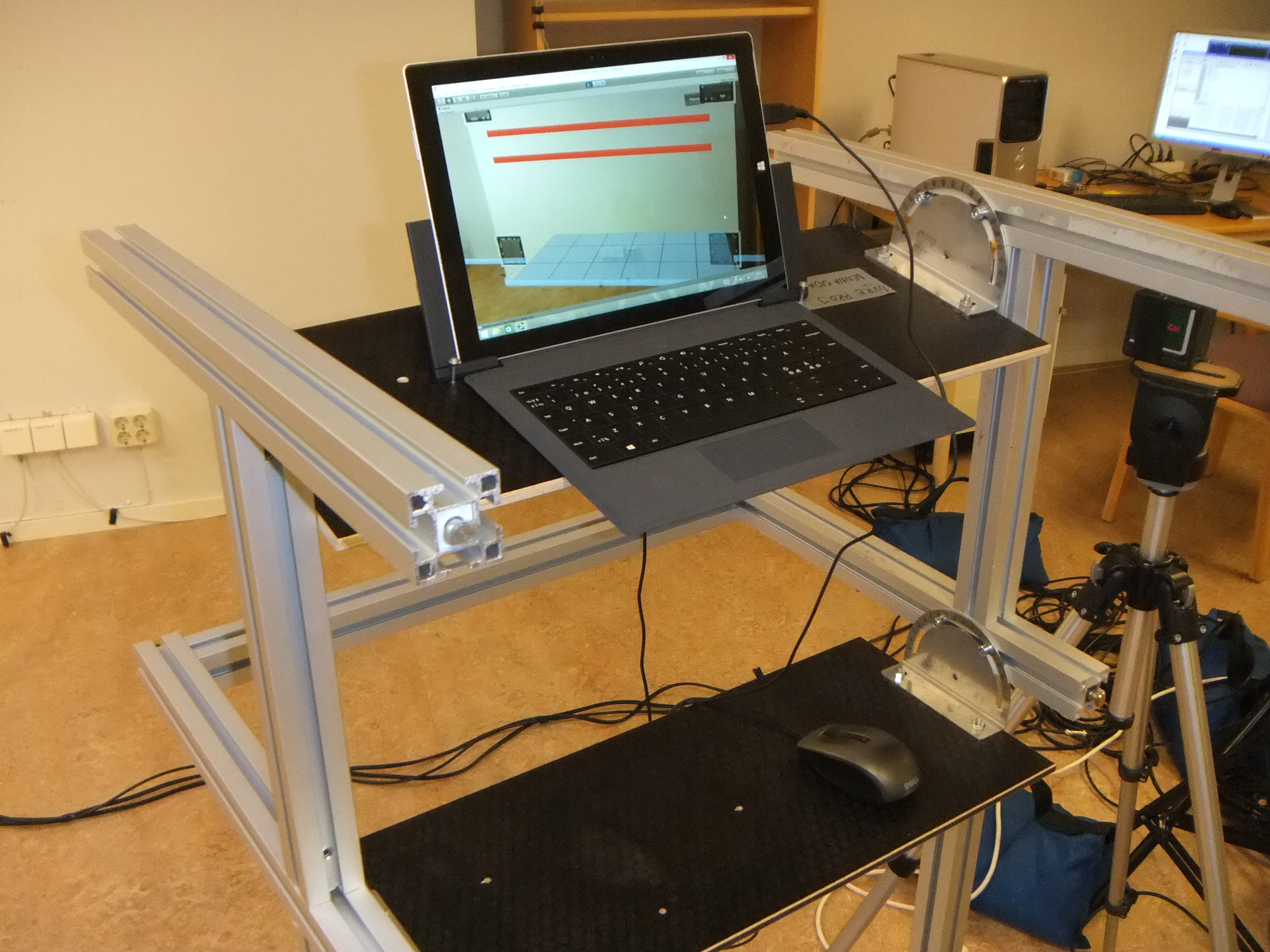

Another activity in the project has been the development of a video see-through augmented reality system, along with an experimental study on positioning accuracy in indoor augmented reality. In this work, a marker-based augmented reality application was developed that displays construction elements (pipes) hidden inside walls as virtual models, visually overlaid in real time on video images of the real wall (Figure 17 (left)). Using this application, we conducted a user experiment to find out how precisely hidden structures in walls can be localized with a video see-through augmented reality guidance system. Another objective was to investigate factors that influence positioning accuracy, such as visual parallax and the targeting method (Figure 17 (right)). The experimental results have been gathered and are currently being analyzed and prepared for publication.
